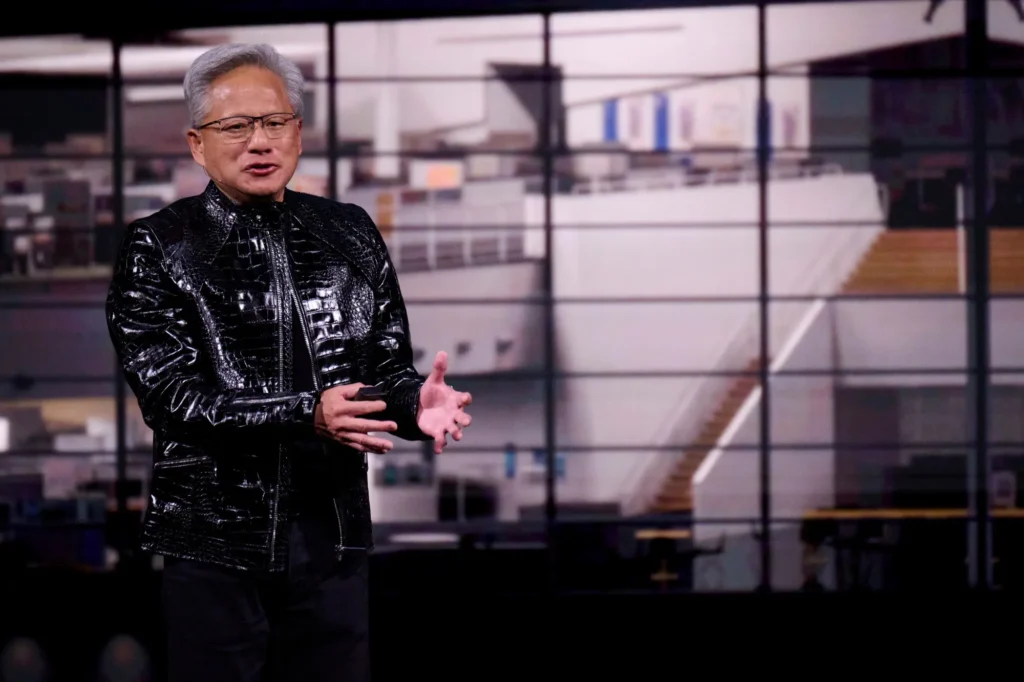

In a major development for the electric vehicle and autonomous driving sector, NVIDIA has officially introduced Alpamayo, which CEO Jensen Huang calls the world’s first “thinking, reasoning” autonomous vehicle AI. Set to debut on U.S. roads by the end of 2026 in the all-new Mercedes-Benz CLA, the system uses Vision-Language-Action (VLA) models to interpret scenes, reason through complex scenarios, and execute driving actions.

The announcement, shared widely on X by Tesla commentator Sawyer Merritt, has sparked intense discussion in the EV community — especially after Elon Musk’s direct response highlighting Tesla’s long-standing leadership in end-to-end autonomy.

At USonWheels.com, your go-to hub for U.S. EV news, Tesla updates, and autonomous tech breakthroughs, we break down what NVIDIA’s Alpamayo means for drivers, how it stacks up against Tesla FSD, and why this validates the path Tesla has pioneered since FSD’s early days.

NVIDIA Alpamayo: The Technical Leap in Autonomous AI

NVIDIA’s Alpamayo is a 10-billion-parameter architecture built on Vision-Language-Action (VLA) models. Unlike traditional autonomous systems that rely on separate perception, planning, and control modules, Alpamayo is fully end-to-end — trained from camera input straight to vehicle actuation.

Key highlights from Jensen Huang’s CES 2026 presentation and NVIDIA’s DRIVE AV announcement:

- Reasoning Traces: The AI doesn’t just act — it explains why it’s taking each action (e.g., “slowing for pedestrian because trajectory predicts crossing”).

- Trajectory Generation: Video input produces safe driving paths alongside natural-language reasoning.

- Open-Source Foundation: Developers get model weights and inference scripts, which they can adapt into smaller runtime models or use to build custom AV tools.

- Safety & Robustness: Alpamayo is paired with NVIDIA’s dual-stack DRIVE AV (an end-to-end AI stack plus classical safety guardrails via Halos). NVIDIA targets rare edge cases through large-scale simulation on its Omniverse and Cosmos platforms.
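To make the reasoning-trace and dual-stack ideas above more concrete, here is a minimal sketch in Python. It is not NVIDIA's API or implementation: `PlannerOutput`, `violates_guardrail`, `select_plan`, and the 2-metre clearance threshold are all hypothetical names and values chosen for illustration. The sketch assumes the end-to-end model proposes a trajectory together with a natural-language explanation, and that a separate rule-based check can override the proposal.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) in metres, vehicle frame (assumed convention)


@dataclass
class PlannerOutput:
    """Hypothetical output of an end-to-end planner: a path plus its explanation."""
    trajectory: List[Point]
    reasoning: str


def violates_guardrail(trajectory: List[Point], obstacles: List[Point],
                       min_gap_m: float = 2.0) -> bool:
    """Classical check: is any waypoint closer than min_gap_m to any obstacle?"""
    return any(
        math.hypot(x - ox, y - oy) < min_gap_m
        for (x, y) in trajectory
        for (ox, oy) in obstacles
    )


def select_plan(proposal: PlannerOutput, obstacles: List[Point],
                fallback: PlannerOutput) -> PlannerOutput:
    """Use the learned proposal unless the rule-based guardrail rejects it."""
    if violates_guardrail(proposal.trajectory, obstacles):
        return fallback
    return proposal


proposal = PlannerOutput(
    trajectory=[(0.0, 0.0), (5.0, 0.0), (10.0, 0.0)],
    reasoning="slowing for pedestrian because trajectory predicts crossing",
)
fallback = PlannerOutput(trajectory=[(0.0, 0.0)], reasoning="guardrail override: hold position")

chosen = select_plan(proposal, obstacles=[(10.0, 0.5)], fallback=fallback)
print(chosen.reasoning)
```

In this toy setup the obstacle sits half a metre from the last waypoint, so the guardrail rejects the learned proposal and the fallback plan is returned. A production system would check far more than point-to-point distance.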

This stack powers Mercedes’ MB.DRIVE ASSIST PRO in the 2026 CLA, delivering Level 2+ point-to-point urban navigation, proactive collision avoidance, and automated parking, with over-the-air updates for continuous improvement. The CLA is expected to reach U.S. roads by the end of 2026.

Elon Musk Reacts: Tesla Has Been Doing This for Years

Moments after the announcement went viral, Elon Musk replied on X:

“Well that’s just exactly what Tesla is doing 😂 What they will find is that it’s easy to get to 99% and then super hard to solve the long tail of the distribution.”

Musk’s comment underscores a critical truth in autonomous driving: the final 1% — handling unpredictable real-world “long-tail” scenarios — requires billions of miles of fleet data that only Tesla currently possesses.
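A rough back-of-the-envelope calculation shows why the long tail is so data-hungry. The sketch below uses made-up event frequencies, not figures from Tesla or NVIDIA, and assumes rare events occur independently at a constant rate per mile (a simple Poisson model). The function names are hypothetical.

```python
import math


def miles_for_examples(miles_per_event: float, examples_needed: int) -> float:
    """Expected miles to drive before observing `examples_needed` occurrences."""
    return miles_per_event * examples_needed


def prob_at_least_one(miles_driven: float, miles_per_event: float) -> float:
    """Chance of seeing the event at least once, under the Poisson assumption."""
    return 1.0 - math.exp(-miles_driven / miles_per_event)


# Illustrative numbers only: a common scenario vs. a rare long-tail scenario.
common = miles_for_examples(miles_per_event=1_000, examples_needed=1_000)
rare = miles_for_examples(miles_per_event=10_000_000, examples_needed=1_000)

print(f"{common:,.0f} miles")  # 1,000,000 miles
print(f"{rare:,.0f} miles")    # 10,000,000,000 miles
```

Under these assumed rates, collecting a thousand examples of the common scenario takes about a million miles, while the rare one takes about ten billion. That gap is the sense in which the last 1% is harder than the first 99%.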

This isn’t the first time NVIDIA has echoed Tesla’s vision-only, end-to-end approach. As we covered in our deep dive on Tesla’s FSD v14.2.2.4 rollout, Tesla’s neural networks already process raw camera data into actions with built-in reasoning layers — now expanding in upcoming v14.3 updates.

NVIDIA Alpamayo vs Tesla FSD: Head-to-Head Comparison

| Feature | NVIDIA Alpamayo (Mercedes CLA) | Tesla FSD (Current & Upcoming) |
|---|---|---|
| Architecture | End-to-end VLA, 10B params | End-to-end neural nets (scaling to full reasoning in v14.3) |
| Input | Vision + language reasoning | Pure vision (8+ cameras) |
| Data Source | Open datasets + simulation | 10+ billion real-world fleet miles |
| Transparency | Reasoning traces & open weights | Proprietary but improving explainability |
| Launch Timeline | U.S. roads end of 2026 (Level 2+) | Supervised today; unsupervised Robotaxi 2026 |
| Long-Tail Strength | Strong simulation | Unmatched real-world data moat |

Tesla’s advantage? As Musk noted, the “long tail” is the real battle. Our earlier report on Tesla Cybercab production accelerating (over 100 units already rolling out of Giga Texas) shows the company isn’t just chasing Level 2 — it’s building unsupervised robotaxis for 2026 deployment.

Read more: Tesla Cybercab Production Accelerates: Over 100 Units Roll Out from Giga Texas

What This Means for U.S. EV Drivers and the Robotaxi Revolution

NVIDIA’s move is bullish for the entire EV industry. Legacy automakers like Mercedes now have a powerful open foundation to accelerate autonomy without starting from scratch. Yet Tesla’s integrated hardware-software ecosystem, vast Supercharger network, and real-world data lead position it perfectly for the unsupervised future.

This also ties directly into upcoming Tesla models in 2026, including the Cybercab robotaxi and refreshed lineup — all designed around Full Self-Driving as the core experience.

For everyday drivers in states like California, Texas, and Florida, the competition should mean faster safety improvements and more affordable autonomous options. As we reported in “Tesla Pre-Owned Inventory Now Offers Demo Drives,” even shoppers for used Teslas are getting easier access to FSD trials.

The Bottom Line: Validation for Tesla’s Vision

NVIDIA calling Alpamayo the “world’s first” reasoning AV AI actually proves Tesla was right all along. End-to-end, vision-based autonomy with real reasoning is the winning formula — and Tesla has the head start.

Stay ahead of the curve with USonWheels.com — your daily source for Tesla FSD updates, Cybertruck news, Model Y Juniper details, and every major EV breakthrough hitting U.S. roads.

Related Reading on USonWheels.com:

- Breaking: Tesla Rolls Out FSD v14.2.2.4 – Here’s Why It’s a Must-Update

- Upcoming Tesla Models in 2026: Revolutionizing Electric Mobility

- Tesla Cybercab Wheel Covers Revealed: Innovative Gold Ring Design

What do you think — will Mercedes + NVIDIA catch Tesla, or is the long-tail data moat unbeatable? Drop your thoughts in the comments and subscribe for weekly EV and Tesla updates straight to your inbox!